Wiki Chip: 14 nm lithography process

Topics: Electrical Engineering, Moore's Law, Nanotechnology, Semiconductor Technology

It was hard to tell at the time, amid the distraction of the Y2K bug, the explosion of reality television, and the popularity of post-grunge music, that the turn of the millennium was also the beginning of the end of easy computing improvements. A golden age of computing, which had powered data-intensive and computational science for decades, would soon begin drawing to a close. Even with novel ways of assembling computing systems and new algorithms that take advantage of those architectures, the performance gains predicted by Moore’s law were bound to come to an end, but in a way few people expected.

Moore’s law is the observation that the number of transistors in dense integrated circuits doubles roughly every two years. Before the turn of the millennium, all a computational scientist needed to do to get a computer more than twice as fast was to wait two years. Calculations that would have been impractical became accessible to desktop users. It was a time of plenty, and many problems could be solved by brute-force computing, from the quantum interactions of particles to the formation of galaxies. Giant lattices could be modeled, and enormous numbers of particles tracked. Improved computers enabled the analysis of genomic variations in entire communities and facilitated the advent of machine-learning techniques in AI.
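To make the doubling concrete, here is a minimal sketch of the arithmetic, assuming an idealized, perfectly regular two-year doubling period. The function name `transistor_count` and its parameters are illustrative and not taken from any library; real chips never followed the curve this exactly.

```python
def transistor_count(initial_count: float, years: float,
                     doubling_period_years: float = 2.0) -> float:
    """Projected transistor count after `years`, assuming the count
    doubles once every `doubling_period_years` (idealized Moore's law)."""
    return initial_count * 2 ** (years / doubling_period_years)


# Growth factor relative to the starting point (initial_count = 1).
for years in (2, 10, 20):
    factor = transistor_count(1.0, years)
    print(f"after {years:2d} years: x{factor:,.0f}")
```

Under this assumption, two decades of waiting compound into ten doublings, a factor of 1,024, which is why brute-force approaches kept becoming practical.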

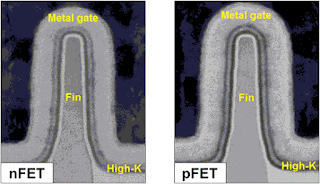

Fundamental physical limits will ultimately end the transistor shrinkage that underlies Moore’s law, and we are close to reaching them. Today, chip production creates structures in silicon that are 14 nanometers wide and shrinking, and seven-nanometer elements are coming to market. At these sizes, thousands of these elements would fit across the width of a human hair. Feature sizes below five nanometers will probably be impossible because of quantum tunneling, in which electrons leak across such narrow gaps and the transistor can no longer switch off cleanly.
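As a rough check on the human-hair comparison, the sketch below counts how many features fit side by side across one hair. The hair width of 75 micrometers is an assumption made here for illustration (real hair varies widely), and the names `HAIR_WIDTH_NM` and `features_across` are hypothetical.

```python
# Assumed hair width: 75 micrometers, expressed in nanometers.
# This value is an illustrative assumption, not a figure from the article.
HAIR_WIDTH_NM = 75_000


def features_across(width_nm: int, feature_nm: int) -> int:
    """Number of whole features of size `feature_nm` that fit
    side by side across a span of `width_nm`."""
    return width_nm // feature_nm


for feature_nm in (14, 7, 5):
    count = features_across(HAIR_WIDTH_NM, feature_nm)
    print(f"{feature_nm:2d} nm features across one hair: {count:,}")
```

With that assumed width, roughly five thousand 14-nanometer features fit across a single hair, consistent with the "thousands" quoted above.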

A Reckoning for Moore’s Law

Why upgrading your computer every two years no longer makes sense.

Ian Fisk, Simons Foundation
